Problem description: I wrote a MapReduce program as an exercise, and it fails at runtime with the error below. I'm a beginner and don't know how to fix it. I searched online and found many suggested causes, but I ruled each of them out and the problem is still unsolved. The source code is posted below; any guidance would be much appreciated!

Purpose of the code: count how many times each word occurs in the hello file.

Error output:

/opt/jdk1.8.0_191/bin/java -javaagent:/opt/idea-IC-182.4892.20/lib/idea_rt.jar=39521:/opt/idea-IC-182.4892.20/bin -Dfile.encoding=UTF-8 -classpath [JDK 1.8.0_191 jars under /opt/jdk1.8.0_191/jre/lib]:/home/user/workspaces/practice/mysecondmapreduce/out/production/mysecondmapreduce:[Hadoop 2.7.2 common, hdfs, mapreduce and yarn jars under /home/user/下载/hadoop-2.7.2/share/hadoop] mapreduce.WordCount

2019-01-18 17:01:58,772 WARN [main] util.NativeCodeLoader (NativeCodeLoader.java:<clinit>(62)) - Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

2019-01-18 17:01:59,103 INFO [main] Configuration.deprecation (Configuration.java:warnOnceIfDeprecated(1173)) - session.id is deprecated. Instead, use dfs.metrics.session-id

2019-01-18 17:01:59,104 INFO [main] jvm.JvmMetrics (JvmMetrics.java:init(76)) - Initializing JVM Metrics with processName=JobTracker, sessionId=

2019-01-18 17:01:59,891 WARN [main] mapreduce.JobResourceUploader (JobResourceUploader.java:uploadFiles(64)) - Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

2019-01-18 17:01:59,915 WARN [main] mapreduce.JobResourceUploader (JobResourceUploader.java:uploadFiles(171)) - No job jar file set. User classes may not be found. See Job or Job#setJar(String).

2019-01-18 17:01:59,925 INFO [main] input.FileInputFormat (FileInputFormat.java:listStatus(283)) - Total input paths to process : 1

2019-01-18 17:02:00,025 INFO [main] mapreduce.JobSubmitter (JobSubmitter.java:submitJobInternal(198)) - number of splits:1

2019-01-18 17:02:00,236 INFO [main] mapreduce.JobSubmitter (JobSubmitter.java:printTokens(287)) - Submitting tokens for job: job_local1743862088_0001

2019-01-18 17:02:00,792 INFO [main] mapreduce.Job (Job.java:submit(1294)) - The url to track the job: http://localhost:8080/

2019-01-18 17:02:00,793 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1339)) - Running job: job_local1743862088_0001

2019-01-18 17:02:00,794 INFO [Thread-14] mapred.LocalJobRunner (LocalJobRunner.java:createOutputCommitter(471)) - OutputCommitter set in config null

2019-01-18 17:02:00,798 INFO [Thread-14] output.FileOutputCommitter (FileOutputCommitter.java:<init>(100)) - File Output Committer Algorithm version is 1

2019-01-18 17:02:00,802 INFO [Thread-14] mapred.LocalJobRunner (LocalJobRunner.java:createOutputCommitter(489)) - OutputCommitter is org.apache.hadoop.mapreduce.lib.output.FileOutputCommitter

2019-01-18 17:02:00,924 INFO [Thread-14] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(448)) - Waiting for map tasks

2019-01-18 17:02:00,926 INFO [LocalJobRunner Map Task Executor #0] mapred.LocalJobRunner (LocalJobRunner.java:run(224)) - Starting task: attempt_local1743862088_0001_m_000000_0

2019-01-18 17:02:01,007 INFO [LocalJobRunner Map Task Executor #0] output.FileOutputCommitter (FileOutputCommitter.java:<init>(100)) - File Output Committer Algorithm version is 1

2019-01-18 17:02:01,037 INFO [LocalJobRunner Map Task Executor #0] mapred.Task (Task.java:initialize(612)) - Using ResourceCalculatorProcessTree : [ ]

2019-01-18 17:02:01,045 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:runNewMapper(756)) - Processing split: file:/home/user/project/hello:0+19

2019-01-18 17:02:01,170 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:setEquator(1205)) - (EQUATOR) 0 kvi 26214396(104857584)

2019-01-18 17:02:01,170 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(998)) - mapreduce.task.io.sort.mb: 100

2019-01-18 17:02:01,171 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(999)) - soft limit at 83886080

2019-01-18 17:02:01,171 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(1000)) - bufstart = 0; bufvoid = 104857600

2019-01-18 17:02:01,171 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:init(1001)) - kvstart = 26214396; length = 6553600

2019-01-18 17:02:01,184 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:createSortingCollector(403)) - Map output collector class = org.apache.hadoop.mapred.MapTask$MapOutputBuffer

2019-01-18 17:02:01,187 INFO [LocalJobRunner Map Task Executor #0] mapred.MapTask (MapTask.java:flush(1460)) - Starting flush of map output

2019-01-18 17:02:01,196 INFO [Thread-14] mapred.LocalJobRunner (LocalJobRunner.java:runTasks(456)) - map task executor complete.

2019-01-18 17:02:01,197 WARN [Thread-14] mapred.LocalJobRunner (LocalJobRunner.java:run(560)) - job_local1743862088_0001

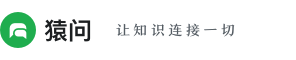

java.lang.Exception: java.io.IOException: Type mismatch in key from map: expected org.apache.hadoop.io.Text, received org.apache.hadoop.io.LongWritable

at org.apache.hadoop.mapred.LocalJobRunner$Job.runTasks(LocalJobRunner.java:462)

at org.apache.hadoop.mapred.LocalJobRunner$Job.run(LocalJobRunner.java:522)

Caused by: java.io.IOException: Type mismatch in key from map: expected org.apache.hadoop.io.Text, received org.apache.hadoop.io.LongWritable

at org.apache.hadoop.mapred.MapTask$MapOutputBuffer.collect(MapTask.java:1072)

at org.apache.hadoop.mapred.MapTask$NewOutputCollector.write(MapTask.java:715)

at org.apache.hadoop.mapreduce.task.TaskInputOutputContextImpl.write(TaskInputOutputContextImpl.java:89)

at org.apache.hadoop.mapreduce.lib.map.WrappedMapper$Context.write(WrappedMapper.java:112)

at org.apache.hadoop.mapreduce.Mapper.map(Mapper.java:125)

at mapreduce.WordCount$WordCountMapper.map(WordCount.java:21)

at mapreduce.WordCount$WordCountMapper.map(WordCount.java:18)

at org.apache.hadoop.mapreduce.Mapper.run(Mapper.java:146)

at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:787)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:341)

at org.apache.hadoop.mapred.LocalJobRunner$Job$MapTaskRunnable.run(LocalJobRunner.java:243)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:511)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

2019-01-18 17:02:01,794 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1360)) - Job job_local1743862088_0001 running in uber mode : false

2019-01-18 17:02:01,795 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1367)) - map 0% reduce 0%

2019-01-18 17:02:01,797 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1380)) - Job job_local1743862088_0001 failed with state FAILED due to: NA

2019-01-18 17:02:01,802 INFO [main] mapreduce.Job (Job.java:monitorAndPrintJob(1385)) - Counters: 0

Process finished with exit code 0

Source code:

package mapreduce;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class WordCount {

    // Two types that ship with Hadoop: Text and LongWritable
    public static class WordCountMapper extends Mapper<LongWritable,Text,Text,LongWritable>{

        @Override
        protected void map(LongWritable key,Text value,Context context) throws IOException, InterruptedException {
            super.map(key,value,context);
            // value is a Text, but split() is a String method, so convert to String first and then split
            String[] splits=value.toString().split("\\s");
            for (String word:splits) {
                // Iterate over each word in the line and emit a count of 1 for it
                context.write(new Text(word),new LongWritable(1));
            }
        }
    }

    public static class WordCountReducer extends Reducer<Text,LongWritable,Text,LongWritable>{

        @Override
        protected void reduce(Text word, Iterable<LongWritable> times, Context context) throws IOException, InterruptedException {
            super.reduce(word, times, context);
            long sum=0L;
            for (LongWritable time:times) {
                // Remember: convert the LongWritable to a long before summing
                sum+=time.get();
            }
            context.write(word,new LongWritable(sum));
        }
    }

    public static void main(String[] args) throws Exception{
        // Remember: create a Configuration object; the class to import is org.apache.hadoop.conf.Configuration
        Configuration conf=new Configuration();
        // Create a Job object
        Job job=new Job(conf);
        // Set the job name
        job.setJobName(WordCountApp.class.getSimpleName());
        job.setJarByClass(WordCountApp.class);
        // Set the Map and Reduce classes and the packaging
        job.setMapperClass(WordCountMapper.class);
        job.setReducerClass(WordCountReducer.class);
        // Remember: the reduce output types must be set
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(LongWritable.class);
        // Set the input and output paths
        FileInputFormat.addInputPaths(job,"/home/user/project/hello");
        FileOutputFormat.setOutputPath(job,new Path("/home/user/project/out"));
        // Submit the job to YARN and wait for it to finish
        job.waitForCompletion(true);
    }
}

Contents of the hello file:

hello you
hello me
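
A likely cause, judging from the stack trace: `Mapper.map(Mapper.java:125)` is called from `WordCount$WordCountMapper.map(WordCount.java:21)`, which matches the `super.map(key,value,context);` call at the top of the overridden method. Hadoop's default `Mapper.map` writes the input pair through unchanged, so a `LongWritable` key reaches the map output collector where `Text` is expected. Removing that call should clear this exception. The `super.reduce(word, times, context);` call probably deserves the same treatment, since the default `reduce` forwards every value itself and may leave the loop after it with nothing to sum. The sketch below is not Hadoop code. It is a stdlib-only Java model of that mechanism, with hypothetical names (`SuperMapModel`, `Collector`, `BaseMapper`, `BrokenMapper`, `FixedMapper`) and with `String`/`Long` standing in for `Text`/`LongWritable`.

```java
import java.io.IOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Stdlib-only model (hypothetical names) of why calling super.map() triggers the type mismatch. */
public class SuperMapModel {

    /** Stand-in for the map output collector: rejects keys whose class is not the declared one. */
    static class Collector {
        final Class<?> expectedKeyClass;
        final List<Object[]> pairs = new ArrayList<>();

        Collector(Class<?> expectedKeyClass) {
            this.expectedKeyClass = expectedKeyClass;
        }

        void write(Object key, Object value) throws IOException {
            if (key.getClass() != expectedKeyClass) {
                throw new IOException("Type mismatch in key from map: expected "
                        + expectedKeyClass.getName() + ", received " + key.getClass().getName());
            }
            pairs.add(new Object[] {key, value});
        }
    }

    /** Stand-in for Mapper: the default map() forwards the input pair unchanged. */
    static class BaseMapper {
        void map(Long offset, String line, Collector out) throws IOException {
            out.write(offset, line);
        }
    }

    /** Mirrors the posted mapper: calls super.map() before emitting words. */
    static class BrokenMapper extends BaseMapper {
        @Override
        void map(Long offset, String line, Collector out) throws IOException {
            super.map(offset, line, out); // writes a Long key where a String key is expected
            for (String word : line.split("\\s")) {
                out.write(word, 1L);
            }
        }
    }

    /** The same mapper without the super.map() call. */
    static class FixedMapper extends BaseMapper {
        @Override
        void map(Long offset, String line, Collector out) throws IOException {
            for (String word : line.split("\\s")) {
                out.write(word, 1L);
            }
        }
    }

    /** Feeds each line to the mapper, then sums the emitted counts per word. */
    static Map<String, Long> run(BaseMapper mapper, String... lines) throws IOException {
        Collector out = new Collector(String.class);
        long offset = 0;
        for (String line : lines) {
            mapper.map(offset, line, out);
            offset += line.length() + 1;
        }
        Map<String, Long> counts = new TreeMap<>();
        for (Object[] pair : out.pairs) {
            counts.merge((String) pair[0], (Long) pair[1], Long::sum);
        }
        return counts;
    }

    public static void main(String[] args) throws IOException {
        System.out.println(run(new FixedMapper(), "hello you", "hello me"));
        try {
            run(new BrokenMapper(), "hello you", "hello me");
        } catch (IOException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

In this model the fixed mapper yields a count of 2 for hello and 1 each for you and me, while the broken one fails on its first write with the same kind of message as in the log. In the real job, the corresponding change would be deleting the two `super` calls and leaving the rest of the mapper and reducer as posted.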
